From Monolith to Microservices

The software development landscape is evolving rapidly to support increasing development velocity. Having moved through the Monolith and SOA eras and the rise of the public cloud, and now firmly in the Cloud Native era, developers are looking for tools that increase their engineering productivity while embracing new technology stacks.

In the modern service developer's toolset, there is a tool called the "service mock." The Cloud Native era has brought unique challenges for service mocking. In this article, I will describe these new challenges, and the motivation behind starting the Mockintosh project with all the important features that we put into it. But first, we need to understand what a mock is.

What is a Mock?

"Mock objects are simulated objects that mimic the behavior of real objects in controlled ways." (Wikipedia)

Service mocks have been around for years, supporting service development continuity while mocking service dependencies. Traditionally, a mock was an HTTP API substitute that allowed replacing real services ("original services") with much simpler and predictable alternatives.

Service mocks provide predictable behavior from service dependencies to speed up both the development and testing stages. You also don't need to deploy the original services, removing the CPU, RAM and HDD requirements.
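To make the idea concrete, here is a minimal sketch of a service mock built only on the Python standard library. The "users" service, its paths, and the helper names `start_mock` and `fetch` are assumptions made up for illustration; this is not how any particular mock product is implemented.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical canned responses for an imaginary "users" service.
CANNED = {
    "/users/1": {"id": 1, "name": "Alice"},
    "/health": {"status": "ok"},
}


class MockHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Look up the canned body; anything unknown gets a 404.
        body = CANNED.get(self.path)
        status = 200 if body is not None else 404
        if body is None:
            body = {"error": "not mocked"}
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, *args):
        pass  # silence per-request logging


def start_mock(port=0):
    """Start the mock on a background thread; port 0 picks a free port."""
    server = HTTPServer(("127.0.0.1", port), MockHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch(server, path):
    """Tiny client used to show that the mock answers predictably."""
    conn = http.client.HTTPConnection("127.0.0.1", server.server_port, timeout=5)
    conn.request("GET", path)
    resp = conn.getresponse()
    data = json.loads(resp.read())
    conn.close()
    return resp.status, data
```

A client that normally talks to the real service only needs its base URL pointed at the mock's address to get the same predictable answers on every run.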

Today, there are plenty of service mock vendors in the market, some proprietary and some Open Source. Vendors include CA Service Virtualization (Lisa), Wiremock, Mockery, Mockoon, HoverFly, and more. Service mocks have a fairly mature feature set that has been validated over the years. Features include request matching rules and response templates with dynamic capabilities, as well as automatic CORS.

As mentioned in the beginning, mocks create certain challenges in Cloud Native environments. Let's see what they are and what an ideal microservice mocking solution would look like. Toward the end of the article, we introduce Mockintosh, an Open Source mock solution designed for microservices.

Microservice Mock Support - What's Missing?

Overall, existing service mocks provide great value. However, as the technology stack changes (e.g. from Monolith to Microservices), mocks are becoming less compatible, especially with Cloud Native environments such as Kubernetes and ECS (where the container-based approach creates new challenges relating to mock services usage).

Because service mocks stand in for the original services, the differences between a Monolith and a Microservice architecture mirror the differences between a traditional service mock and what is required from a Cloud Native service mock.

Although most service mocks can be dockerized and deployed into these modern tech stacks, operating these service mocks in modern environments can become cumbersome. Here are some of the challenges:

1. Orchestration & Manageability

A Monolithic architecture usually includes no more than a handful of services; therefore, a handful of service mocks would suffice. Just as we don't need any orchestration for a handful of services, we don't have to invest time in managing the service mocks.

With a Microservice architecture, the number of services can go up to hundreds or even thousands. Today, you need an orchestration system such as Kubernetes or ECS to manage all of these services. The number of service mocks in a Microservice architecture is likely to match the number of services, so the mocks present an orchestration challenge of their own.

Note: The number of services isn't the only dimension of scale. One needs to consider the number of versions and backward compatibility each service, and therefore each mock, needs to support.

2. Configuration, Maintenance & Version Control

Configuration is the core of every service mock. It's the blueprint of a specific service version. It includes specific instructions of how to respond (and/or how not to respond) in certain situations.

A good service mock always provides an up-to-date alternative to the original service. So, mock configuration requires continuous updating and version control. As services usually enjoy individual roadmaps, managing the different versions multiplied by the number of service mocks can become cumbersome.

3. Deployment

Service mocks should be deployed close to the original services.

During the Monolith days, a hosted service mock or a service mock deployed at the datacenter would have sufficed. With, say, Kubernetes, the mocks should be deployed within the Kubernetes cluster, and therefore become less accessible. Starting, stopping, and restarting the service mocks requires the same level of effort as doing the same for the original services (e.g. a rolling deployment takes time to gracefully restart the mock).

4. Memory and CPU Footprint

When deployed in a cluster, all service mocks share resources between themselves and other services. Therefore service mocks must have a tiny footprint in terms of memory and CPU requirements, especially in a low-resource environment like the developer's laptop.

Design Guidelines for a Microservice-Friendly Mock

So what would a Microservice-compatible mock service look like?

Keep Feature Parity with Traditional Service Mocks

Any microservice-friendly service mock should first and foremost have feature parity with traditional service mocks. For example, it should let you configure request matching rules that select a response configuration inside the mock config. In turn, the response configuration must provide rich templating capabilities, so that a response can include pieces of the request as well as dynamically and randomly generated values. Features like automatic CORS and SSL support should also be preserved.
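The matching-plus-templating idea can be sketched in a few lines of Python. The rule format, the `respond` function, and the `$random_hex` placeholder below are hypothetical names chosen for this illustration; real mock tools each define their own configuration syntax.

```python
import random
import re
import string

# Hypothetical rules: each one pairs a request matcher with a response template.
RULES = [
    {
        "method": "GET",
        "path": r"^/users/(?P<id>\d+)$",
        "status": 200,
        # $id comes from the request path, $random_hex is generated per call.
        "body": '{"id": $id, "token": "$random_hex"}',
    },
    {
        "method": "POST",
        "path": r"^/users$",
        "status": 201,
        "body": '{"created": true}',
    },
]


def render(template, values):
    """Fill $placeholders; unknown placeholders are left untouched."""
    return string.Template(template).safe_substitute(values)


def respond(method, path, rng=random):
    """Return (status, body) from the first rule matching the request."""
    for rule in RULES:
        match = re.match(rule["path"], path)
        if rule["method"] == method and match:
            values = dict(match.groupdict())
            values["random_hex"] = "%08x" % rng.getrandbits(32)
            return rule["status"], render(rule["body"], values)
    return 404, '{"error": "no matching rule"}'
```

Calling `respond("GET", "/users/42")` echoes the `42` from the request path into the body and attaches a fresh random token on every call.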

Microservice-Specific Features

In addition to providing the common feature set, Microservice-friendly mocks should support features specific to Microservices while increasing the engineering productivity when working in such an environment:

1. Multi-service

A microservice-friendly mock should be able to serve dozens of service mocks from a single process or Docker container. This is more than a convenience, as it can also make the mock resource footprint 10-100x lighter. Multi-service support should include a virtual host mode, in which multiple mocks share the same port and differ only by hostname.
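Virtual host mode boils down to routing on the HTTP `Host` header. The sketch below assumes two made-up hostnames, `users.local` and `orders.local`, and a hypothetical `dispatch` function; it shows only the routing decision, not a full server.

```python
# Hypothetical mocks keyed by hostname; all of them share one listening port.
MOCKS = {
    "users.local": {"/users/1": (200, '{"id": 1}')},
    "orders.local": {"/orders/7": (200, '{"id": 7, "status": "shipped"}')},
}


def dispatch(host_header, path):
    """Pick the mock by hostname, ignoring any :port suffix in the header."""
    hostname = host_header.split(":", 1)[0].lower()
    routes = MOCKS.get(hostname)
    if routes is None:
        return 404, '{"error": "unknown host"}'
    return routes.get(path, (404, '{"error": "not mocked"}'))
```

Because every mock lives in the same process, adding another service is one more dictionary entry rather than one more container.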

2. Footprint

If we want to run a virtual environment with mocks replacing dozens of real services, the memory requirement of each mock must be small, which influences the choice of mock technology (e.g. language). For example, in the case of Java, a minimum base image size is 1.5G. We can't fit dozens of Microservice mocks onto a single laptop at 200-500MB of RAM per service.
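The arithmetic behind that statement is easy to check. The count of 24 mocks below is an assumption standing in for "dozens"; the 200-500MB range comes from the text.

```python
def total_ram_gb(mock_count, mb_per_mock):
    """Total RAM in GB (decimal) when every mock runs as its own process."""
    return mock_count * mb_per_mock / 1000


# Assumed: 24 mocks, each needing between 200MB and 500MB of RAM.
low = total_ram_gb(24, 200)   # 4.8 GB
high = total_ram_gb(24, 500)  # 12.0 GB
```

That is most or all of the memory on a typical developer laptop before a single real service has been started.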

3. Language Neutrality / No-code Mock

Users should not care which language the mock technology is written in. With the exception of programmatic extensibility, all main features should be available from any language. This is easily achieved if working with a mock does not require writing any code, even within automated testing frameworks like JUnit, pytest and the like.

4. Support for Performance Testing

Service mocks should support performance testing as part of a modern system. The experiments that developers do with their services often include performance testing and measurements. A common requirement is to have a mock in place of an original service that would respond with realistic performance characteristics. With regard to performance testing, data variability in the form of datasets and dynamic value generators is important.
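As an illustration, the sketch below combines a jittered response delay with a small rotating dataset. The function names, the 50ms base delay, and the dataset rows are all assumptions for the example; a real mock would take these from its configuration.

```python
import itertools
import random
import time

# Hypothetical dataset; rows are served in rotation so responses vary.
DATASET = [{"user": "alice"}, {"user": "bob"}, {"user": "carol"}]
_rows = itertools.cycle(DATASET)


def pick_delay(rng, base_ms=50.0, jitter_ms=20.0):
    """Return a delay in seconds, drawn uniformly around the base latency."""
    low, high = base_ms - jitter_ms, base_ms + jitter_ms
    return max(0.0, rng.uniform(low, high)) / 1000.0


def mock_response(rng, sleep=time.sleep):
    """Wait for a realistic delay, then return the next dataset row."""
    delay = pick_delay(rng)
    sleep(delay)
    return next(_rows), delay
```

Passing `sleep` as a parameter keeps the delay logic testable without actually waiting.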

5. Chaos Engineering and Resilience Testing

Continuing with non-functional testing, modern systems are subject to chaos engineering practices, and one of the most common of these is resiliency testing. For mocking technology, this means the ability to simulate service slowdowns and crashes.
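One simple way to picture fault injection is a per-request dice roll. The sketch below is hypothetical: the rates, the 503 status standing in for a crash, and the `chaos_response` name are all assumptions for illustration.

```python
import random


def chaos_response(rng, error_rate=0.2, slow_rate=0.3, slow_seconds=2.0):
    """Return (status, delay_seconds) for one request.

    A share of requests fails outright (simulated crash), another share
    succeeds only after a long delay (simulated slowdown), and the rest
    behave normally.
    """
    roll = rng.random()
    if roll < error_rate:
        return 503, 0.0
    if roll < error_rate + slow_rate:
        return 200, slow_seconds
    return 200, 0.0
```

The caller under test then has to prove that its timeouts, retries, and fallbacks actually work.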

6. Support for Frontend Testing

While focusing on Microservices, let's not forget the use case of frontend developers. For this category of users, a service mock enables earlier and quicker implementation of frontend features without waiting for an API backend to be ready. To help them, mocks need to support "scenarios" of varying responses and have automatic CORS support. For regular backend developers, this capability is also useful in complex workflow testing.
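A "scenario" can be thought of as an ordered list of responses that one endpoint steps through on successive calls. The `/cart` endpoint and its three steps below are invented for this sketch.

```python
from collections import defaultdict

# Hypothetical scenario: the same endpoint answers differently on each call.
SCENARIOS = {
    "/cart": [
        (200, '{"items": []}'),
        (200, '{"items": ["book"]}'),
        (500, '{"error": "backend down"}'),
    ],
}
_counters = defaultdict(int)


def next_response(path):
    """Return the next step of the scenario, wrapping around at the end."""
    steps = SCENARIOS.get(path)
    if not steps:
        return 404, '{"error": "not mocked"}'
    index = _counters[path] % len(steps)
    _counters[path] += 1
    return steps[index]
```

A frontend developer can walk a UI through the empty cart, the filled cart, and the error state simply by reloading the page three times.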

7. Asynchronous Communication (e.g. Kafka)

What's really missing today in service mocks is support for asynchronous communication technologies, namely message queues. Kafka and RabbitMQ are the top examples that come to mind. There is currently no well-adopted approach for mocking async services, since the popularity of message queues is relatively recent. This is an interesting opportunity to innovate and offer an approach to close that gap. In our Mockintosh project, we have some ideas on how to address this need.
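To show the shape of the problem, here is a deliberately simplified sketch in which an in-memory broker stands in for a message queue. It does not use the Kafka or RabbitMQ client APIs and it is not a description of Mockintosh's approach; `InMemoryBroker`, the topic names, and `mock_payment_service` are all hypothetical.

```python
import queue


class InMemoryBroker:
    """Toy stand-in for a message broker: one FIFO queue per topic."""

    def __init__(self):
        self.topics = {}

    def publish(self, topic, message):
        self.topics.setdefault(topic, queue.Queue()).put(message)

    def poll(self, topic):
        """Return the next message on the topic, or None if it is empty."""
        try:
            return self.topics.setdefault(topic, queue.Queue()).get_nowait()
        except queue.Empty:
            return None


def mock_payment_service(broker):
    """Mocked consumer: reacts to one order with a canned payment event."""
    order = broker.poll("orders")
    if order is not None:
        reply = {"order_id": order["order_id"], "status": "approved"}
        broker.publish("payments", reply)
```

The point is that an async mock is defined by the messages it consumes and the messages it produces in reaction, rather than by a request and a response.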

8. Dynamic Configuration

We will conclude the list of required features with the ability to alter the mock settings and manage them remotely, without restarting the mock process or container. This feature has at least two applications. The first is the ability to alter the mock configuration without a time-consuming restart, which can take seconds for a local service and minutes in a Kubernetes environment. The second is the ability to manage the state of a mocked service throughout automated testing, to guarantee clean fixtures in tests.
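Both applications can be pictured with a small in-process configuration store. The `MockConfig` class below is a hypothetical sketch; in a real mock, `update` and `reset` would typically sit behind a management endpoint rather than be called directly.

```python
import copy
import threading


class MockConfig:
    """Route table that can be changed while the mock keeps serving."""

    def __init__(self, routes):
        self._defaults = copy.deepcopy(routes)
        self._routes = copy.deepcopy(routes)
        self._lock = threading.Lock()

    def get(self, path):
        with self._lock:
            return self._routes.get(path, (404, "not mocked"))

    def update(self, path, status, body):
        """Change one response at runtime, with no restart needed."""
        with self._lock:
            self._routes[path] = (status, body)

    def reset(self):
        """Restore the initial routes, e.g. between automated tests."""
        with self._lock:
            self._routes = copy.deepcopy(self._defaults)
```

A test can call `update` to force an error response for one case and `reset` in its teardown, so the next test starts from a clean fixture.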

Introducing Mockintosh

I am sure that the list of features we went through could easily be expanded. Here at UP9, we're not just passively looking at this gap; we're actively working to address it. So we came up with Mockintosh, a powerful, lightweight, low-footprint API mock for microservices. It includes all the features listed above, so it helps developers and testers easily orchestrate and manage multiple mock services. It is also easy to maintain and deploy, uses little RAM, has a small Docker image, is simple to configure, and is language neutral. And as part of our commitment to the Open Source community, we decided to share it and maintain it as an Open Source project.

And, as usually happens with Open Source, we already see that low-level technology is not enough. There have to be higher-level products that address organization-level and enterprise needs related to microservice mocking.

Going Beyond Mock Technology

When we were researching the market for UP9, we wanted to understand what prevents modern developers from adopting service mocks. One main insight stood out: the source of friction is not a lack of mock technology, but the "plumbing" around it.

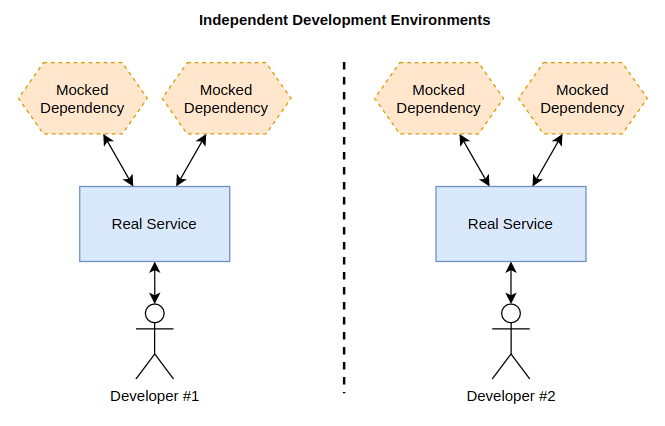

Regardless of how good the mock technology is, someone has to write and maintain its config. Also, there is a need to reconfigure clients to point to mocked services instead of real ones. Specifically for the case of microservices, the virtualized environment configuration becomes a complicated task, where you need to choose which services should be real and which are mocked.
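The real-versus-mocked choice can be sketched as a function that produces the base URL each client should use. The service names, the `internal.example` URLs, and the `localhost:8000` mock address below are all assumptions made up for the example.

```python
# Hypothetical service registry: where the real services live.
REAL_URLS = {
    "users": "http://users.internal.example",
    "orders": "http://orders.internal.example",
    "billing": "http://billing.internal.example",
}
# Assumed address of a single multi-service mock.
MOCK_HOST = "http://localhost:8000"


def build_environment(mocked_services):
    """Map every service to its real URL or to the mock, as requested."""
    unknown = set(mocked_services) - set(REAL_URLS)
    if unknown:
        raise ValueError(f"unknown services: {sorted(unknown)}")
    return {
        name: f"{MOCK_HOST}/{name}" if name in mocked_services else url
        for name, url in REAL_URLS.items()
    }
```

With three services this is trivial, but with hundreds of services and several versions of each, the same mapping becomes the "plumbing" problem described above.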

It is hard to imagine efficient work in such a situation without a company-wide mock repository. Mock configurations are a natural part of a service contract repository with versioning and comparison features. As part of the developer efficiency toolkit, UP9 provides effortless mock config generation, as well as a virtual environment configurator tool.

Get started with Open Source Mockintosh with an easy installation, and follow the UP9 blog for more upcoming tutorials about using Mockintosh.